Launching at RSA 2026: AQtive Guard Expands AI Security Posture Management Capabilities

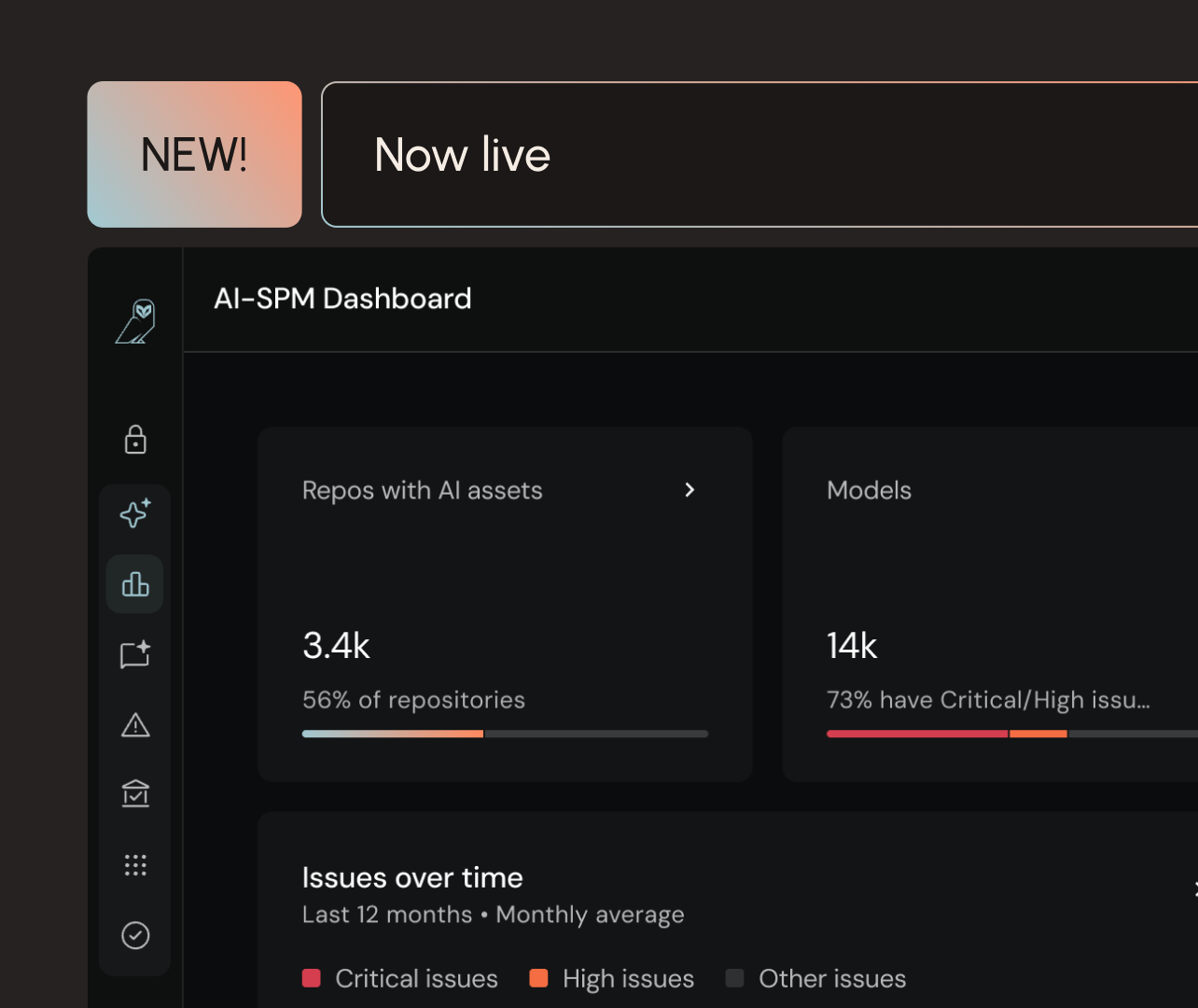

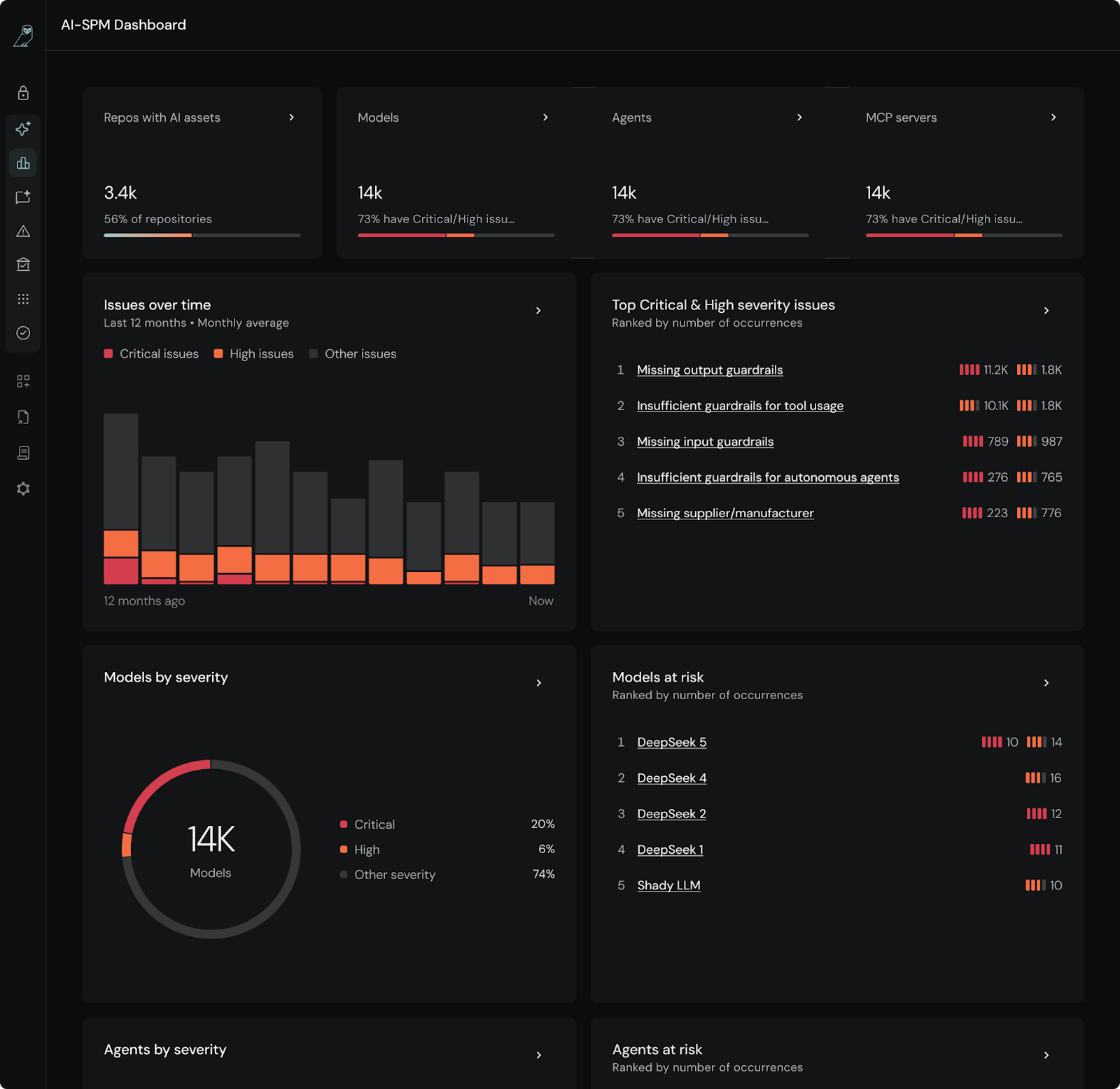

The capabilities shipping in AQtive Guard at RSA 2026 are built around a single premise: real visibility and real control over AI systems in production. AQtive Guard’s AI-SPM covers everything from AI usage in browsers to risk analysis of models and MCP servers for the AI that is being consumed and the AI being built by your teams.

The capabilities shipping in AQtive Guard at RSA 2026 are built around a single premise, real visibility and real control over AI systems in production. AQtive Guard AI-SPM secures both the AI your teams build (models, MCP servers, and agents) and the AI tools your teams use. The platform discovers and analyzes AI assets while providing runtime protection to safeguard interactions with those agents and third-party tools, with comprehensive risk analysis across your AI ecosystem.

Here's what's new and what it changes about how your program actually runs.

What's released:

- Runtime Protection — guardrails enforcement on every AI interaction, no code changes required

- MCP Server risk analysis — Automated security evaluation of MCP connectors across your environment

- Shadow AI discovery — Cloud scanning to surface AI models and agents that bypassed formal deployment

- Shift-left AI security — Static analysis and CI/CD integration for GitHub and GitLab

- AI Governance reporting — Continuously updated posture reports mapped to EU AI Act, NIST AI RMF, and other compliance frameworks

AI runtime protection: guardrails enforcement without code changes

Most organizations have guidelines for how AI applications should handle sensitive data. What they don't have is enforcement. AI-powered applications in production are trusted to behave within bounds that nobody is actively verifying.

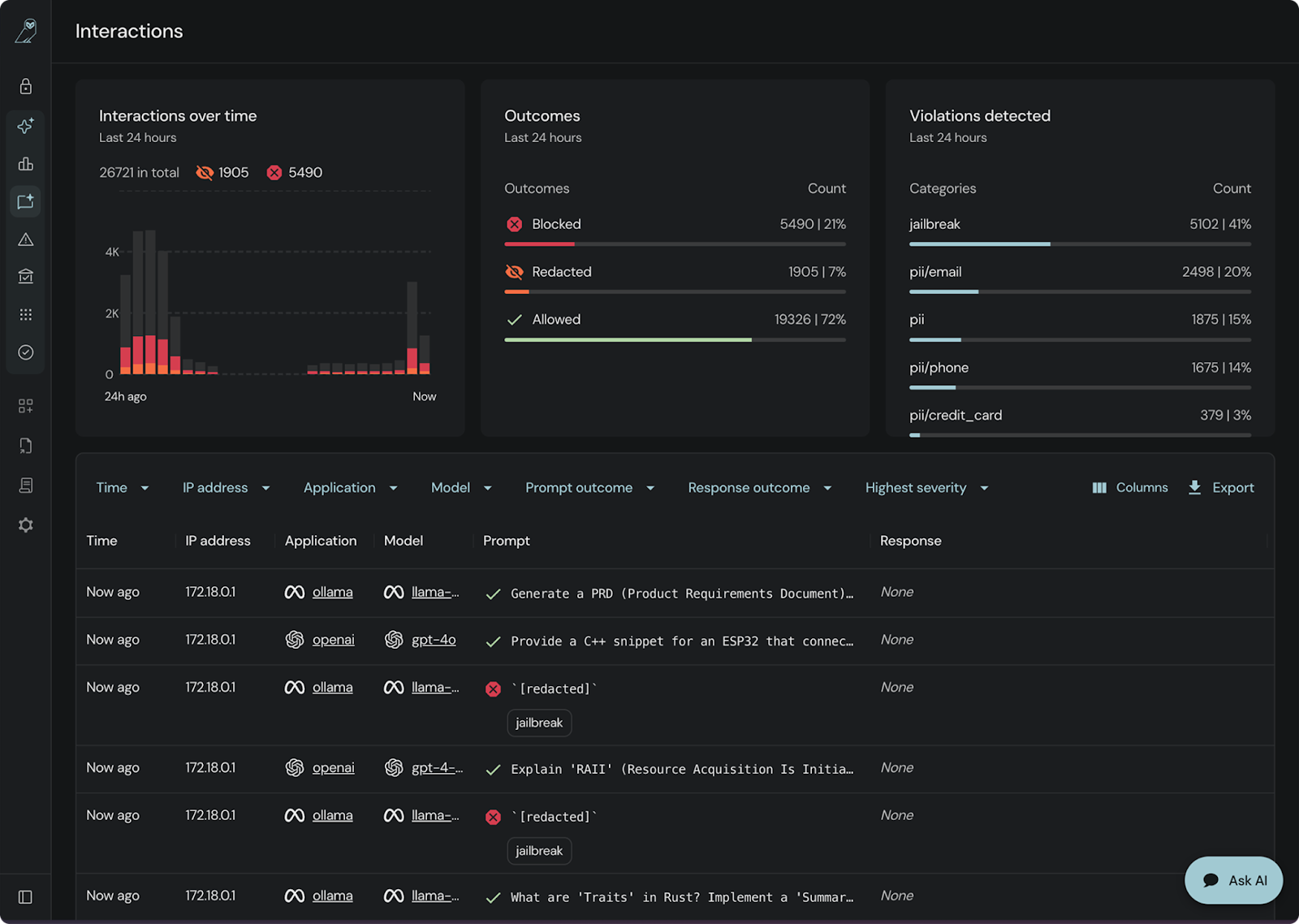

AQtive Guard's new runtime protection capability applies guardrails, on every prompt in, every response out. Policies run automatically against AI interactions without requiring application code changes.

You configure what safe looks like for each application: which inputs get blocked, what data can't appear in outputs, how violations are handled. The same enforcement that would take a security engineer weeks to implement manually across dozens of applications runs continuously from day one.

An AI usage dashboard provides real-time visibility into what is being blocked, what is being permitted, and where policies are being enforced. A policy that exists only on paper and one that actually runs in production are rarely the same. From web-based AI apps like Gemini or ChatGPT to CLI-based tools like Claude, and even custom agents built through local gateways, AQtive Guard provides full visibility.

AQtive Guard Interactions show which prompts have been blocked to prevent jailbreaks, data leakage and more.

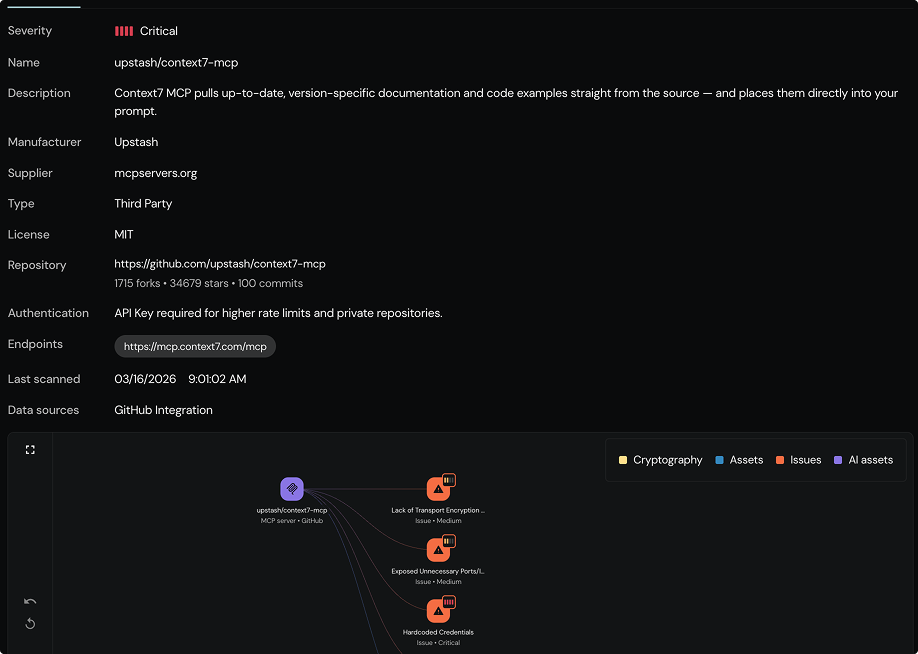

MCP server security: Automated risk analysis when review can't keep up

MCP (Model Context Protocol) servers act as connectors between AI agents and enterprise systems, giving agents access to internal APIs, databases, and tooling. As more teams build agentic workflows, MCP servers are becoming a primary way AI interacts with sensitive enterprise infrastructure. They're also becoming a blind spot.

Most security teams don't have a systematic process for evaluating whether MCP connectors are safe, overprivileged, or malicious.

AQtive Guard now runs automated risk analysis against MCP servers in your environment. An autonomous security agent evaluates configuration and behavior, surfaces risks tied to malicious tooling, excessive permissions, and prompt injection attacks, and gives you a prioritized view of what needs attention.

AQtive Guard evaluates, prioritizes, and maps MCP risk.

The MCP ecosystem is growing faster than any security team can manually review it. This closes that gap.

Shadow AI discovery: Surface unknown models, apps, and agents in your environment

Visibility into what your team officially provisioned is only half the picture.

The harder-to-see half, and often the more relevant one, is the AI that showed up in your cloud environment outside that process: models deployed by developers moving fast, tools that quietly expanded their capabilities, workloads that bypassed the review cycle entirely.

And it's not just shadow deployments, models can arrive through supply chains nobody vetted, carrying risks that aren't visible from the outside.

This is where AI security posture management diverges from CSPM. CSPM monitors cloud infrastructure configuration. AI-SPM focuses on what's running on that infrastructure, the models, agents, and pipelines that interact with your data.

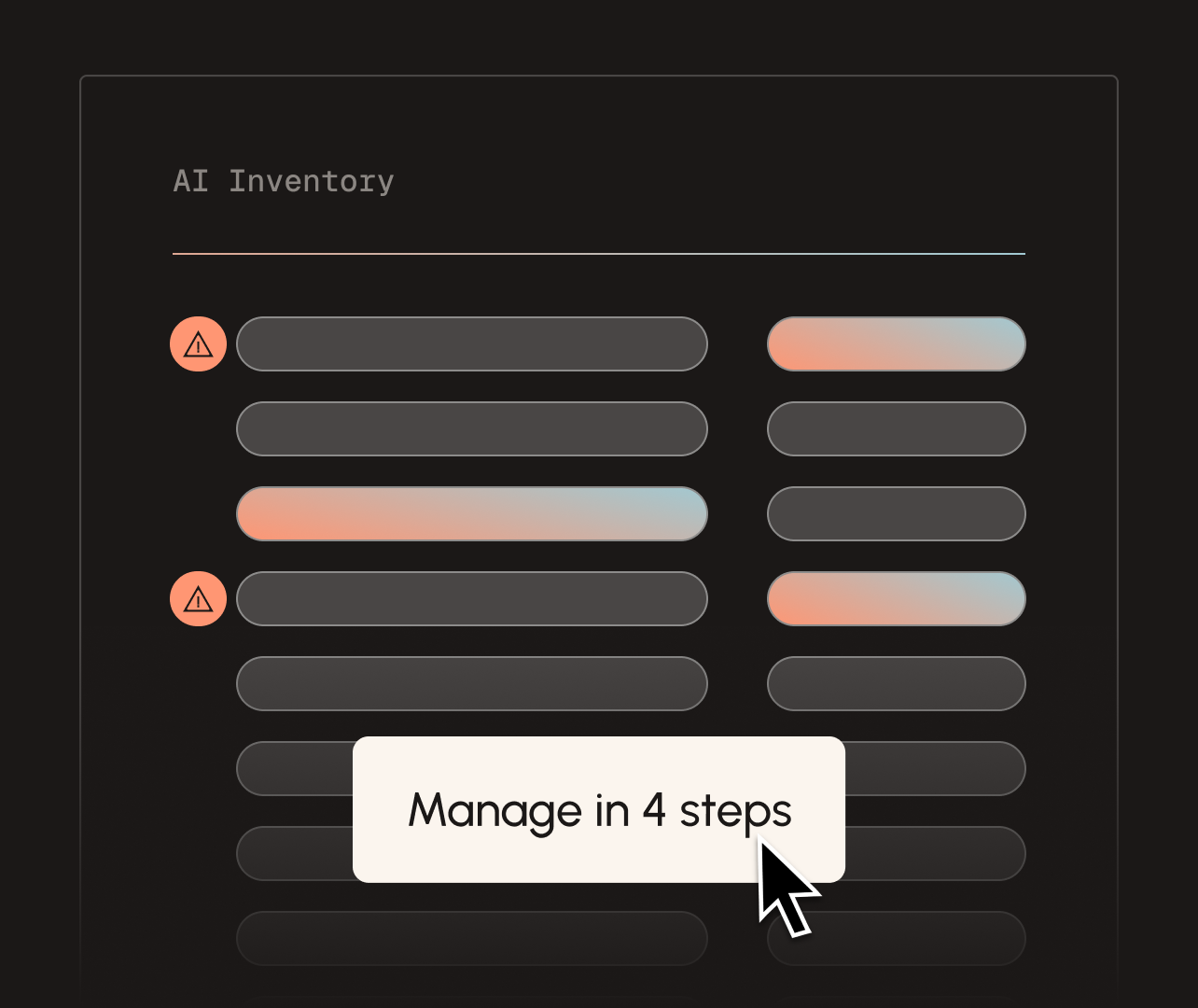

AQtive Guard now scans AWS environments to surface deployed AI models and agents running across your cloud estate, including the ones that weren't provisioned through formal channels. Shadow AI stops being invisible and enters a catalogued, tracked inventory.

AQtive Guard provides complete AI visibility across repos, models, agents and MCP servers.

Now you can manage AI-powered applications distinctly from the rest of your software estate, with ownership attribution attached, so routing a remediation task takes seconds rather than a Slack thread trying to find who owns a zombie agent.

Shift-Left AI security: Catch vulnerabilities before they merge

AI security issues found in production are expensive. The model is already connected to live data, developers have moved on, and remediation requires coordination across teams. The same issue caught at the PR stage takes minutes to fix.

Static analysis now runs in the developer workflow, catching hardcoded secrets, insecure model configurations, and vulnerable dependencies in AI codebases before they merge. Enterprise GitHub integration supports org-level scanning, so findings surface where developers are already working rather than in a separate security console.

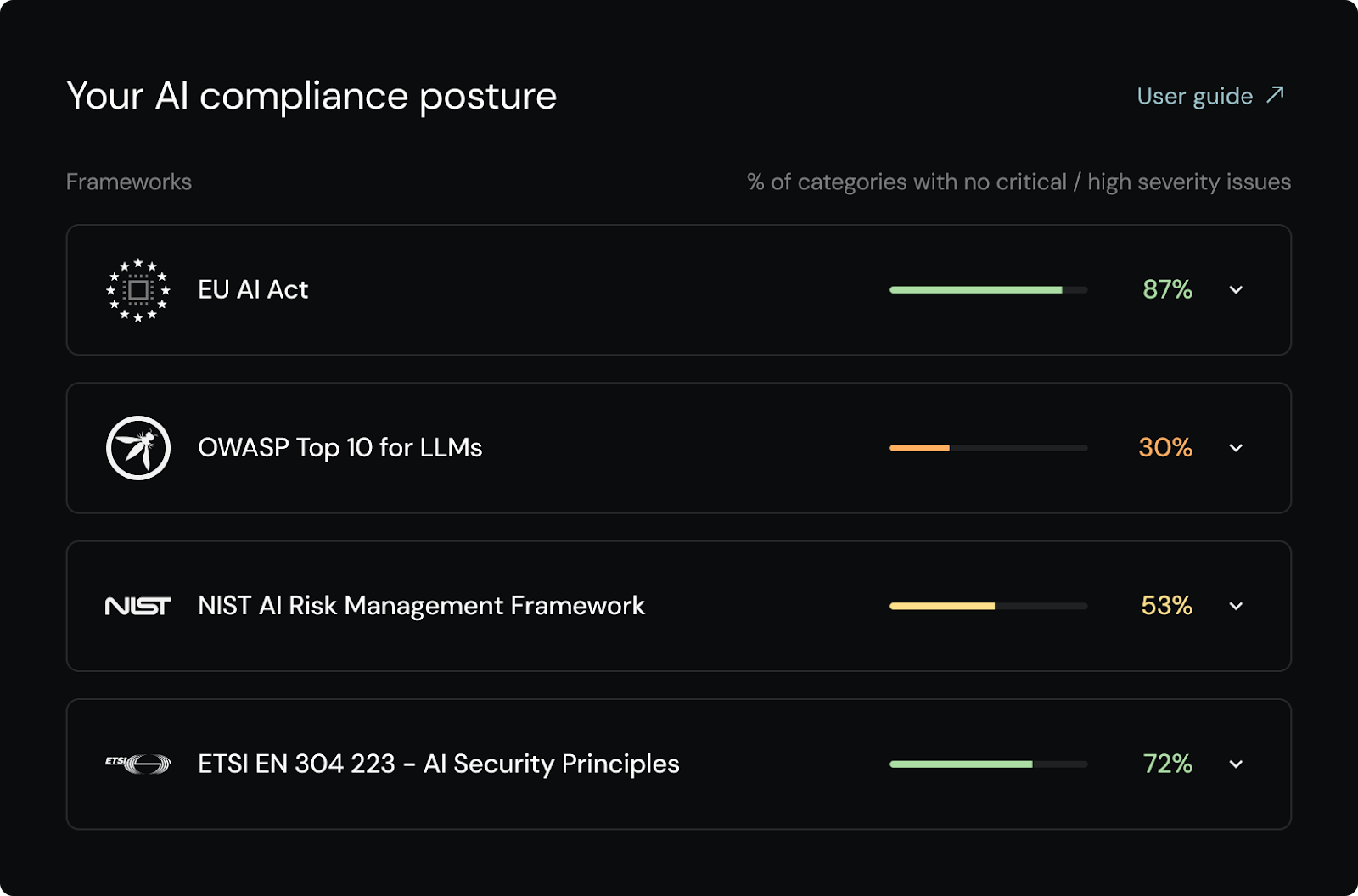

AI governance reporting: Map posture to EU AI Act and NIST AI RMF

When an auditor asks for evidence that your AI applications are operating within policy, pulling that together manually, from multiple tools, across multiple teams, takes time most AppSec engineers don't have. The posture report becomes a project of its own.

AQtive Guard's Posture Reporting gives you a continuously updated view of AI risk mapped to EU AI Act and NIST AI RMF, producible in seconds rather than assembled over a week from five different sources.

AQtive Guard helps map AI assets to compliance standards.

Findings route into existing issue trackers so remediation stays in your workflow, not a separate process layered on top of it.

How AI-SPM works end-to-end: From discovery to enforcement

These capabilities don't operate independently. Discovery feeds inventory. Inventory informs risk scoring. Risk scoring shapes guardrail policy. Guardrails generate enforcement data. Enforcement data feeds posture reports.

That feedback loop, discovery, inventory, risk scoring, enforcement, reporting, is what AI security posture management is supposed to look like in practice. This release moves it closer to operational reality for security teams that have been stitching together point solutions to approximate it.

See the capabilities live at RSA Conference, including demos of AI runtime guardrails and AI system discovery, at the SandboxAQ booth (Booth #S-2027).

Schedule time ahead of the show at aqtiveguard.com/events/rsac-2026 or request an AI-SPM demo!