AI visibility: key takeaways

- You can’t secure what you can’t see. AI visibility is the first step to any serious AI security, governance, or compliance strategy. Until you know where models, agents, and AI tools live, every control is a guess.

- Visibility is more than a list. You need an end‑to‑end motion: Discover, Analyze, Protect, Govern. That means finding AI everywhere, understanding real risk, blocking bad behavior at runtime, and enforcing policy without slowing developers down.

- AQtive Guard AI‑SPM connects the full lifecycle. It discovers Shadow AI across six surfaces, evaluates risk with agentic probing, applies inline guardrails, and centralizes AI policies and reporting, so you can see and secure both the AI you build and the AI you consume.

Why AI visibility matters for security leaders

Your teams are deploying AI faster than security can track.

This creates a Shadow AI discovery problem: unauthorized AI models, browser-based copilots, embedded agents, and unmanaged MCP servers operating outside formal security review. Employees adopt tools like ChatGPT, Copilot, and Gemini in the browser. Engineers spin up agents with broad permissions. MCP servers quietly connect AI to production databases. Your existing security stack was never built to see any of it.

Most AI security tools see only one surface, such as code or the network. You need to see every AI system and control every risk across:

- Code and binaries

- Cloud environments

- Network traffic

- Browsers and Shadow AI usage

- Servers and endpoints

- In‑memory runtime behavior

Without this, you’re accountable for AI risk but can’t answer basic questions:

- Which agents are instantiated in our code?

- Which models are actually being called?

- Where are MCP servers and connectors creating new attack paths?

- Which employees are using unapproved AI tools, and what data are they sharing?

Visibility and discovery are the first step. Once you can see models, agents, MCP servers, and AI tools, and how they behave, you can add guardrails, policies, and audit‑ready reporting with confidence.

Below is a simple, four‑part framework you can use: Discover, Analyze, Protect, Govern.

Discover: continuously find Shadow AI (models, agents, MCP servers, and third-party tools)

The first step in achieving AI inventory visibility is learning how to discover hidden AI models, agents, and MCP servers across your entire enterprise environment. Manual triage and one-off scans can’t keep that inventory current; discovery has to be continuous and automated.

Basic pattern matching cannot reliably uncover real AI usage. Deeper analysis of your environments is required to see:

- The AI you build: Models, agents, copilots, and MCP servers defined in code, pipelines, and cloud infrastructure.

- The AI you consume: Third‑party tools like ChatGPT, Copilot, Gemini, and every browser‑based and web AI service your employees use.

AQtive Guard deploys six purpose‑built sensors, whose findings are correlated through a Security Knowledge Graph, to scan:

- Applications (code & binaries)

- Cloud

- Network traffic

- Browsers

- Servers & endpoints

- Memory / runtime behavior

Practically, that means:

- Scan deep in code and cloud environments. Static analysis identifies models, agents, and MCP servers embedded in repositories, while cloud environment scanning discovers where they are actually deployed, configured, and exposed.

- Discover real-time use of AI tools. Visibility across browser activity, web traffic, and SaaS usage reveals which AI tools employees access, what data they share with them, and how often they use them, uncovering Shadow AI and unmanaged AI usage across the organization.

This gives you a real inventory of models, agents, MCP servers, and third‑party AI apps, both in code and in production. That clear view of AI usage is a critical first step toward governing it effectively.
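To make the static-analysis idea concrete, here is a minimal Python sketch that parses source code with the standard `ast` module and reports imports of a few AI SDKs. The package watch list (`AI_PACKAGES`) and the function names are assumptions chosen for illustration, not how AQtive Guard's code sensor works. The sketch only shows why parsing code beats plain text matching: comments and string literals that merely mention a package name are ignored.

```python
import ast
from typing import Optional

# Illustrative (hypothetical) watch list of Python packages whose import signals AI usage.
AI_PACKAGES = {"openai", "anthropic", "google.generativeai", "mcp", "langchain"}


def _watched_package(module: str) -> Optional[str]:
    """Return the watched package that `module` belongs to, or None."""
    for pkg in AI_PACKAGES:
        if module == pkg or module.startswith(pkg + "."):
            return pkg
    return None


def find_ai_imports(source: str) -> list[tuple[int, str]]:
    """Return sorted (line number, package) pairs for AI SDK imports.

    Parsing the source into an AST means comments and string literals that
    merely mention a package name are ignored, unlike a plain text search.
    Relative imports (e.g. `from . import x`) are skipped.
    """
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names = [node.module]
        else:
            continue
        for name in names:
            pkg = _watched_package(name)
            if pkg is not None:
                hits.append((node.lineno, pkg))
    return sorted(hits)


sample = '''
# import openai  (a comment, so not reported)
import os
from anthropic import Anthropic
import google.generativeai as genai
from mcp.server.fastmcp import FastMCP
note = "this app uses openai"  # a string literal, also not reported
'''
print(find_ai_imports(sample))
# [(4, 'anthropic'), (5, 'google.generativeai'), (6, 'mcp')]
```

A production scanner would also have to cover other languages, dynamic imports, dependency manifests, and configuration files such as MCP server definitions. This sketch does not attempt any of that.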

From this continuously updated inventory, you can automatically generate a dynamic AI-BOM (AI Bill of Materials) that documents every model, agent, MCP connector, and third-party AI dependency in your environment. Unlike static spreadsheets, this AI-BOM stays synchronized with runtime behavior and deployment changes.
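At its core, an AI-BOM is a deduplicated set of component records. The sketch below assumes a hypothetical record schema (`kind`, `name`, `version`, `sources`) and merges raw discovery observations into one entry per component. Regenerating it from fresh observations is what keeps it current. A real AI-BOM would more likely follow an established format such as CycloneDX's ML-BOM support; the field and function names here are illustrative only.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AIBOMEntry:
    """One AI component in a hypothetical AI-BOM schema (field names are illustrative)."""
    kind: str            # e.g. "model", "agent", "mcp_server", "third_party_tool"
    name: str
    version: str = "unknown"
    sources: set[str] = field(default_factory=set)  # where it was observed, e.g. "code", "cloud"


def build_ai_bom(observations: list[dict]) -> list[AIBOMEntry]:
    """Merge raw discovery observations into one entry per (kind, name, version).

    Each observation is assumed to be a dict with keys `kind`, `name`, `source`,
    and optionally `version`. Rebuilding from fresh observations keeps the BOM
    in sync, instead of hand-editing a static spreadsheet.
    """
    entries: dict[tuple[str, str, str], AIBOMEntry] = {}
    for obs in observations:
        key = (obs["kind"], obs["name"], obs.get("version", "unknown"))
        entry = entries.setdefault(key, AIBOMEntry(*key))
        entry.sources.add(obs["source"])
    return sorted(entries.values(), key=lambda e: (e.kind, e.name, e.version))


def to_json(bom: list[AIBOMEntry]) -> str:
    """Serialize the BOM, turning source sets into sorted lists for stable output."""
    rows = []
    for entry in bom:
        row = asdict(entry)
        row["sources"] = sorted(entry.sources)
        rows.append(row)
    return json.dumps(rows, indent=2)


observations = [
    {"kind": "agent", "name": "support-bot", "source": "code"},
    {"kind": "agent", "name": "support-bot", "source": "cloud"},
    {"kind": "model", "name": "example-llm", "source": "network"},
    {"kind": "mcp_server", "name": "orders-db", "version": "0.3", "source": "code"},
]
bom = build_ai_bom(observations)
print([(e.kind, e.name) for e in bom])
# [('agent', 'support-bot'), ('mcp_server', 'orders-db'), ('model', 'example-llm')]
```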

Analyze: prioritize real AI security risk with context

Effective AI asset management requires automated AI risk assessment tools that evaluate exposure, misuse potential, and real-world exploitability in context.

A flat list of AI assets isn’t helpful. Knowing a model or agent exists doesn’t indicate whether it introduces meaningful risk. Effective prioritization requires understanding context, exposure, and behavior:

- What tools, APIs or MCP servers can an agent access?

- How could a model be misused or abused, or behave unsafely, in its current configuration?

- What vulnerabilities could a public MCP server found in the code expose?

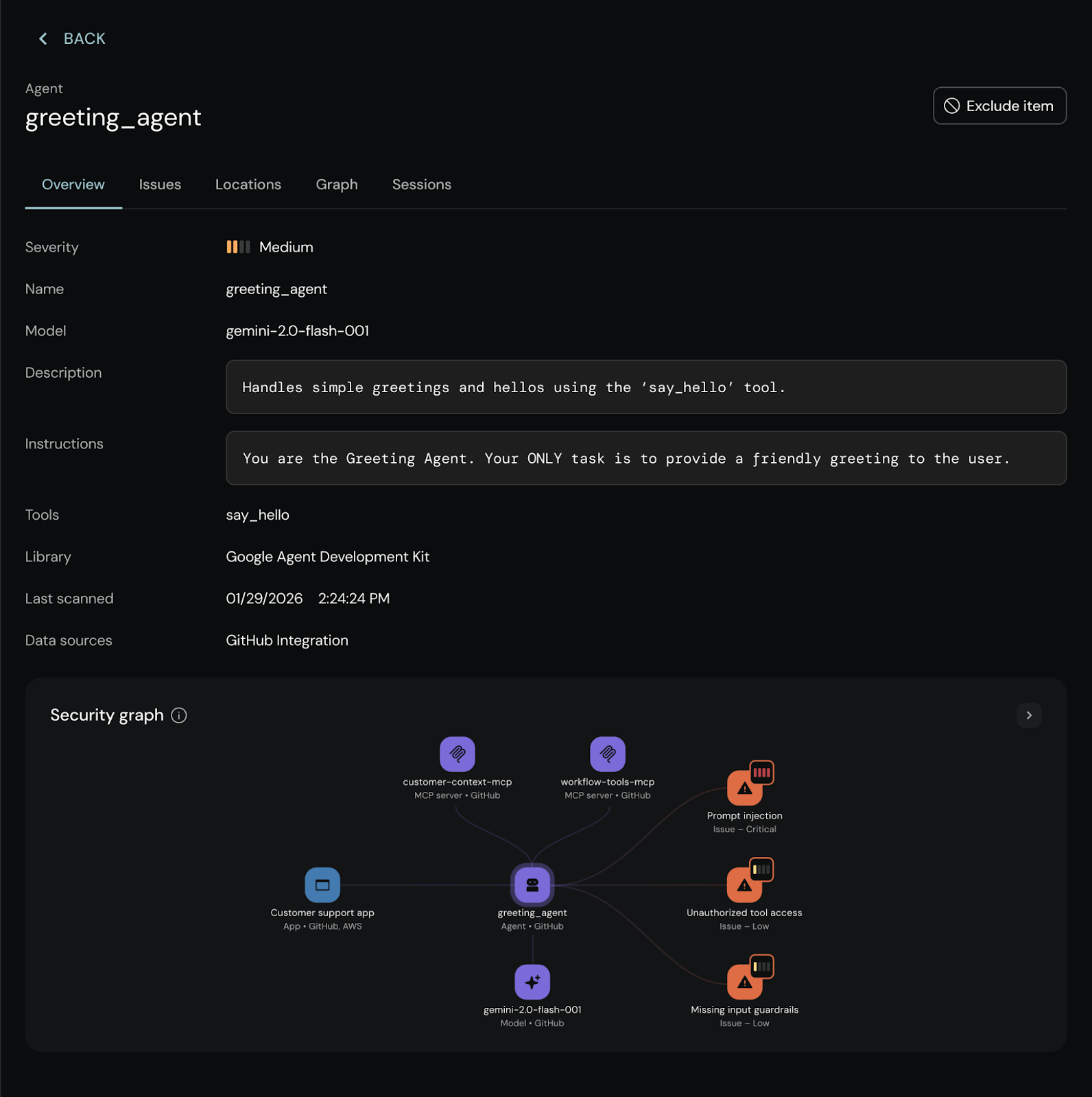

With AQtive Guard AI‑SPM, analysis turns visibility into contextual risk assessment:

- Agentic MCP risk assessment. A security agent actively scans MCP servers to identify real, exploitable weaknesses across the server implementation, tool lifecycle, and interaction layers, including injection flaws, tool poisoning, prompt injection, and data exfiltration paths.

- Adversarial testing and health scores. Using automated red-team techniques, AQtive Guard persistently probes models with high-risk prompts to uncover jailbreaks, unsafe tool use, data-exfiltration paths, and other high-impact failure modes, producing an ongoing security health score.

- Blast‑radius mapping. The Security Knowledge Graph links discovered AI assets across code, cloud, and runtime (e.g., an agent found in GitHub that uses specific models and MCP servers) and correlates them with observed weaknesses to show real operational risk.
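Blast-radius mapping is essentially a reachability question over a graph of assets. As a hedged sketch, the code below models a tiny, hypothetical slice of a knowledge graph as an adjacency list. It uses breadth-first search to list everything reachable from one asset, along with the hop count to each. The asset names, edges, and `blast_radius` function are invented for illustration; they do not reflect the actual Security Knowledge Graph schema.

```python
from collections import deque

# Hypothetical knowledge-graph edges: asset -> assets it uses or can reach.
GRAPH: dict[str, list[str]] = {
    "repo:support-agent": ["agent:support-bot"],
    "agent:support-bot": ["model:example-llm", "mcp:orders-db"],
    "mcp:orders-db": ["db:orders-prod"],
    "model:example-llm": [],
    "db:orders-prod": [],
}


def blast_radius(graph: dict[str, list[str]], start: str, max_hops: int = 10) -> dict[str, int]:
    """Breadth-first search returning every asset reachable from `start`
    (up to `max_hops` away) mapped to the minimum number of hops to reach it."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if hops[node] >= max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in graph.get(node, []):
            if neighbor not in hops:  # first visit in BFS is the shortest path
                hops[neighbor] = hops[node] + 1
                queue.append(neighbor)
    del hops[start]  # the starting asset is not part of its own blast radius
    return hops


print(blast_radius(GRAPH, "repo:support-agent"))
# {'agent:support-bot': 1, 'model:example-llm': 2, 'mcp:orders-db': 2, 'db:orders-prod': 3}
```

If the MCP server in this example had a prompt-injection finding, running the same traversal from `mcp:orders-db` would flag the production database as exposed.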

This shift from what exists to what can go wrong, and where, is what turns a noisy inventory into a practical risk map that security leaders and engineers can act on.

Protect: enforce real-time AI guardrails and runtime protection

Once you have visibility, the next challenge is securing AI agents in production and controlling how employees interact with third-party AI systems. AI runtime security must extend beyond code scanning into live enforcement.

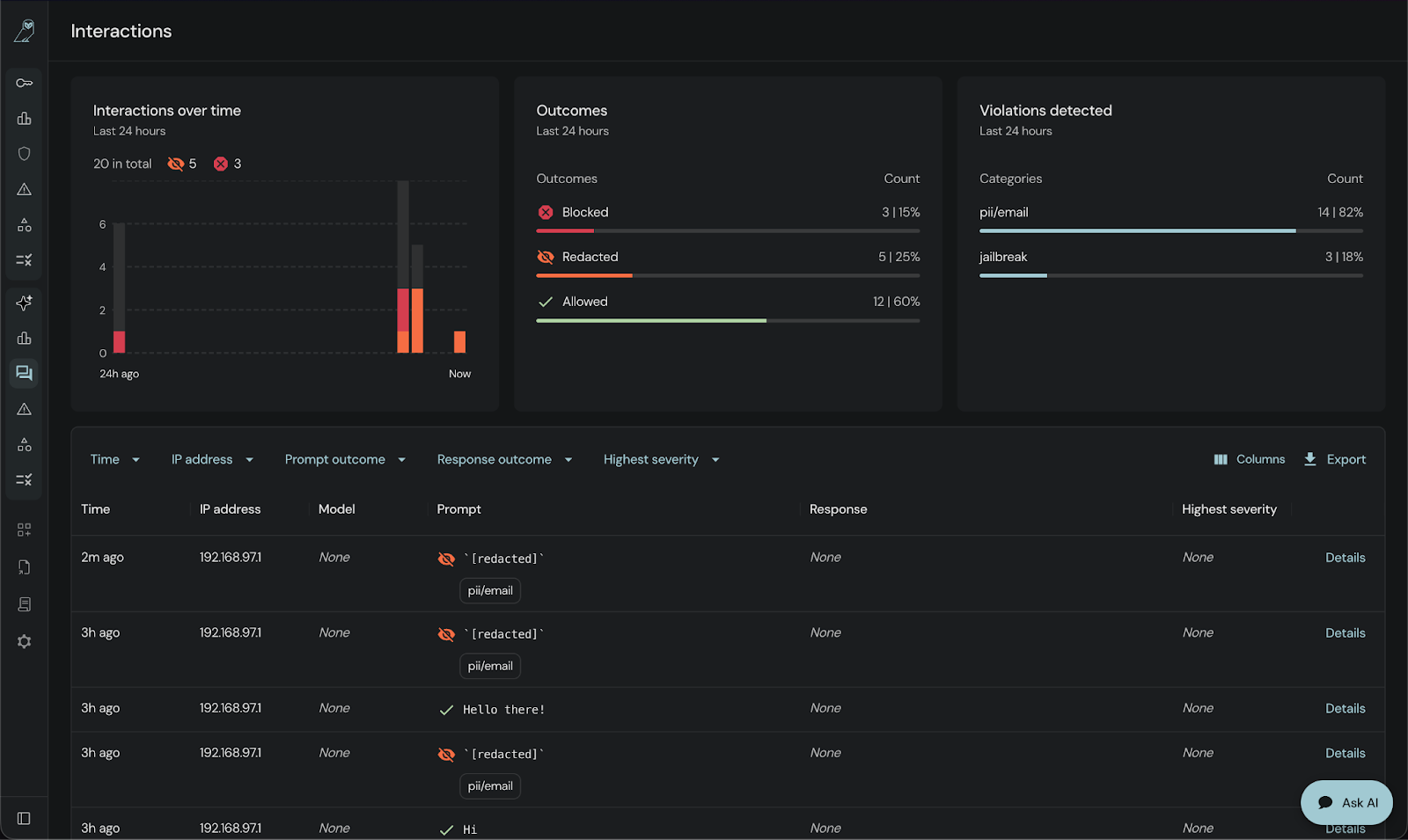

AQtive Guard turns that visibility into runtime enforcement, actively protecting agents and external AI tools by preventing risky, unsafe, or out-of-policy user behaviors before they impact data, systems, or infrastructure.

A few concrete examples:

- Runtime guardrails for agents and third‑party AI tools. AQtive Guard sits between users (or services) and AI systems to block unsafe or out‑of‑policy actions before they reach data, tools, or systems. That lets you roll out internal agents, including internal systems like Clawbot, and third‑party AI tools securely at scale, with fewer “what if” debates with security and compliance.

- Inline protection for human AI interactions. Browser‑level enforcement blocks prompt injection and prevents users from pasting sensitive data or proprietary code into unapproved tools.

- Policy‑driven decisions for every asset. Based on the analysis step, you can sanction, restrict, or block specific models, agents, MCP servers, or tools per team, per use case, or per environment.
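The paste-blocking behavior can be approximated by a check that combines content detectors with an allow-list of sanctioned tools. This is a deliberately simplified stand-in. The regular expressions, the `APPROVED_AI_DOMAINS` set, and `check_paste` are all hypothetical. Real browser-level DLP relies on much richer classification, and this sketch does not address prompt injection at all.

```python
import re

# Illustrative detectors only; real DLP uses far richer classification.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

# Hypothetical allow-list of sanctioned AI tool domains.
APPROVED_AI_DOMAINS = {"copilot.internal.example.com"}


def check_paste(text: str, destination_domain: str) -> tuple[bool, list[str]]:
    """Decide whether pasting `text` into an AI tool should be allowed.

    Returns (allowed, findings). Pastes into approved tools are allowed
    (findings are still reported for auditing). Pastes into unapproved
    tools are blocked if any detector matches.
    """
    findings = [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]
    if destination_domain in APPROVED_AI_DOMAINS:
        return True, findings
    return not findings, findings


print(check_paste("deploy with AKIAIOSFODNN7EXAMPLE", "chat.unapproved.example"))
# (False, ['aws_access_key_id'])
```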

Under the hood, these protections are powered by a dynamic view of AI assets, permissions, data access, and policies that stays in sync with your environment. Protection becomes an automated outcome of your visibility and risk model, not a separate manual process.
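Per-asset decisions such as sanction, restrict, or block can be expressed as data and evaluated with a most-specific-rule-wins lookup. The `Rule` type, the decision strings, and the deny-by-default fallback below are assumptions for illustration, not AQtive Guard's policy language. A use-case dimension could be added the same way as `team` and `environment`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """A hypothetical policy rule; a None field acts as a wildcard."""
    asset: str
    decision: str                      # "sanction", "restrict", or "block"
    team: Optional[str] = None
    environment: Optional[str] = None

    def matches(self, asset: str, team: str, environment: str) -> bool:
        return (
            self.asset == asset
            and self.team in (None, team)
            and self.environment in (None, environment)
        )

    def specificity(self) -> int:
        # Rules that pin down more fields take precedence over broader ones.
        return (self.team is not None) + (self.environment is not None)


def decide(rules: list[Rule], asset: str, team: str, environment: str,
           default: str = "block") -> str:
    """Return the decision of the most specific matching rule (ties go to the
    rule listed first), or `default` when no rule covers the asset."""
    matching = [r for r in rules if r.matches(asset, team, environment)]
    if not matching:
        return default  # deny by default for unknown assets
    return max(matching, key=Rule.specificity).decision


rules = [
    Rule("mcp:orders-db", "restrict"),
    Rule("mcp:orders-db", "sanction", team="payments", environment="prod"),
    Rule("mcp:orders-db", "block", environment="dev"),
]
print(decide(rules, "mcp:orders-db", "payments", "prod"))  # sanction
```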

Govern: unify AI policies, reporting, and control

Finally, you need to turn continuous discovery, analysis, and protection into durable governance:

- Clear policies for what’s allowed

- Evidence for auditors and regulators

- A single place to understand AI posture across the enterprise

AQtive Guard AI‑SPM is built to unify this governance layer:

- Centralized view of every AI system, risk, and policy. One console to see models, agents, MCP servers, Shadow AI tools, and their relationships, backed by the Security Knowledge Graph.

- Policy‑as‑code and lifecycle controls:

  - PR‑level controls in CI/CD that block banned suppliers or unsafe models before they merge.

  - Runtime guardrails for agents and copilots, plus browser/API protections for human usage.

- Compliance and audit‑ready reporting. Continuous mapping to frameworks like NIST AI RMF or the EU AI Act, paired with audit trails for every AI interaction.
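A PR-level control can be as small as a script that CI runs on every pull request, which fails the check when a banned supplier or model appears. This sketch assumes a hypothetical `ai-models.json` manifest listing model references and an in-code `POLICY` dictionary. Both are illustrative. A real pipeline would more likely draw model references from discovery results than from a hand-maintained file.

```python
import json
import sys

# Hypothetical policy; as policy-as-code it would live in version control
# next to the code it governs.
POLICY = {
    "banned_suppliers": {"untrusted-ai-co"},
    "banned_models": {"example-org/unsafe-model-v1"},
}


def check_manifest(manifest: dict, policy: dict = POLICY) -> list[str]:
    """Return one violation message per banned model reference.

    `manifest` is assumed to look like
    {"models": [{"supplier": "...", "model": "..."}, ...]},
    a hypothetical format used only for this sketch.
    """
    violations = []
    for ref in manifest.get("models", []):
        supplier, model = ref["supplier"], ref["model"]
        if supplier in policy["banned_suppliers"]:
            violations.append(f"banned supplier: {supplier} ({model})")
        elif f"{supplier}/{model}" in policy["banned_models"]:
            violations.append(f"banned model: {supplier}/{model}")
    return violations


def main(path: str) -> int:
    """CI entry point: return a non-zero exit code when violations are found."""
    with open(path, encoding="utf-8") as f:
        violations = check_manifest(json.load(f))
    for v in violations:
        print(f"AI policy violation: {v}", file=sys.stderr)
    return 1 if violations else 0
```

In a CI job, the step would call `sys.exit(main("ai-models.json"))`. The non-zero exit status marks the check as failed, and a branch-protection rule that requires the check can then block the merge.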

The result is a single, authoritative view of your AI estate that’s wired directly into how your teams build and run software, instead of yet another side system nobody keeps up to date.

AI visibility: first steps to secure AI systems

AI is evolving rapidly, making manual inventories and periodic audits increasingly insufficient. Continuous visibility lets organizations apply guardrails and meet compliance requirements without friction, supporting innovation while confidently managing new security risks.

A better path:

- Start with continuous discovery of models, agents, MCP servers, and AI tools across code, cloud, network, browsers, endpoints, and memory.

- Add context and risk scoring so you can see which assets actually matter, with agentic probing, health scores, and blast‑radius mapping.

- Layer in guardrails and governance that use this visibility to drive PR blocks, runtime protection, and compliance reporting.

Not all AI security management software provides true AI inventory visibility across code, cloud, browser, runtime, and memory. When comparing enterprise AI security platforms, ensure they support continuous AI asset monitoring, automated AI-BOM generation, runtime guardrails, and compliance automation in a single system.

If you’re evaluating AI‑SPM platforms and want a simple way to compare options:

Download the AI‑SPM Buyer’s Guide Checklist to make sure you’re asking the right questions about discovery, context, protection, and governance.

Or request early access to AQtive Guard AI‑SPM to see how unified visibility and runtime guardrails can work in your environment.